TCP UDP Questions

Question 1

Explanation

It is a general best practice to not mix TCP-based traffic with UDP-based traffic (especially Streaming-Video) within a single service-provider class because of the behaviors of these protocols during periods of congestion. Specifically, TCP transmitters throttle back flows when drops are detected. Although some UDP applications have application-level windowing, flow control, and retransmission capabilities, most UDP transmitters are completely oblivious to drops and, thus, never lower transmission rates because of dropping.

When TCP flows are combined with UDP flows within a single service-provider class and the class experiences congestion, TCP flows continually lower their transmission rates, potentially giving up their bandwidth to UDP flows that are oblivious to drops. This effect is called TCP starvation/UDP dominance.

TCP starvation/UDP dominance is likely to occur if TCP-based applications are assigned to the same service-provider class as UDP-based applications and the class experiences sustained congestion.

Granted, it is not always possible to separate TCP-based flows from UDP-based flows, but it is beneficial to be aware of this behavior when making such application-mixing decisions within a single service-provider class.
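The effect can be illustrated with a small toy model. This is only a sketch under stated assumptions, not a real TCP implementation: the function name `simulate_shared_class`, the rate units, the capacity of 100, the constant UDP rate of 80, and the simple "add 1 per round, halve on congestion" TCP behavior are all made up for illustration.

```python
def simulate_shared_class(capacity=100.0, udp_rate=80.0, rounds=200):
    """Toy model of one TCP flow and one UDP flow sharing a congested class.

    Returns the average delivered rate per round as (tcp, udp).
    All numbers are arbitrary rate units chosen for illustration.
    """
    tcp_rate = 1.0
    tcp_delivered = 0.0
    udp_delivered = 0.0
    for _ in range(rounds):
        offered = tcp_rate + udp_rate
        if offered > capacity:
            # Congestion: the class drops the excess in proportion to what was offered.
            share = capacity / offered
            tcp_delivered += tcp_rate * share
            udp_delivered += udp_rate * share
            # TCP detects the drops and throttles back; UDP is oblivious and keeps its rate.
            tcp_rate = max(1.0, tcp_rate / 2)
        else:
            tcp_delivered += tcp_rate
            udp_delivered += udp_rate
            # No drops seen: TCP slowly probes for more bandwidth.
            tcp_rate += 1.0
    return tcp_delivered / rounds, udp_delivered / rounds


tcp_avg, udp_avg = simulate_shared_class()
print(f"TCP average: {tcp_avg:.1f}, UDP average: {udp_avg:.1f}")
```

In this model the UDP flow keeps almost all of the rate it asks for, while the TCP flow only gets what is left over.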

Question 2

Question 3

Explanation

TCP Selective Acknowledgement (SACK) prevents unnecessary retransmissions by specifying successfully received subsequent data. Let’s see an example of the advantages of TCP SACK.

TCP (Normal) Acknowledgement vs. TCP Selective Acknowledgement

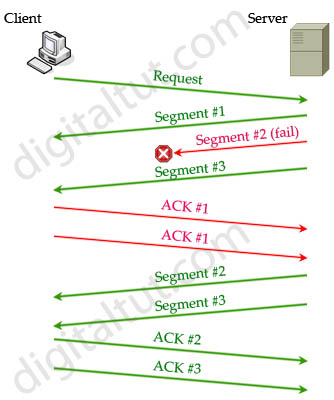

With TCP (normal) acknowledgement, when a client requests data, the server sends the first three segments (the name for packets at Layer 4): Segment#1, #2 and #3. Suppose Segment#2 is lost somewhere on the network while Segment#3 still reaches the client. The client checks Segment#3 and realizes Segment#2 is missing, so it can only acknowledge that it received Segment#1 successfully. Because the client received Segment#1 and Segment#3, it sends two ACK#1 messages to alert the server that it has not received any contiguous data beyond Segment#1. After receiving these ACKs, the server must resend Segment#2 and #3 and wait for the ACKs of these segments.

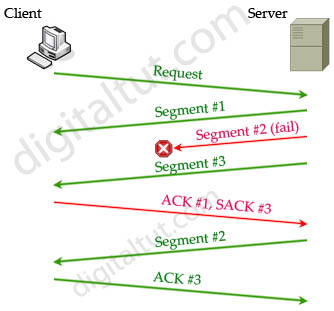

With TCP Selective Acknowledgement, the process is the same until the client realizes Segment#2 is missing. It also sends ACK#1 but adds SACK to indicate that it has received Segment#3 successfully (so there is no need to retransmit this segment). Therefore the server needs to resend only Segment#2. Notice that after receiving Segment#2, the client sends ACK#3 (not ACK#2) to say that it now has all of the first three segments. The server then continues sending Segment#4, #5, …

The SACK option is not mandatory and it is used only if both parties support it.
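The difference between the two cases can be sketched in a few lines of Python. This follows the simplified segment-numbered model used in the example above (real TCP acknowledges byte sequence numbers), and the function name `segments_to_retransmit` is hypothetical.

```python
def segments_to_retransmit(sent, received, sack_enabled):
    """Return the segments the server resends, using the simplified model above."""
    # Cumulative ACK: the highest segment for which everything before it also arrived.
    cumulative_ack = 0
    for seg in sorted(sent):
        if seg in received:
            cumulative_ack = seg
        else:
            break
    # Everything after the cumulative ACK is unacknowledged from the server's view.
    outstanding = [seg for seg in sorted(sent) if seg > cumulative_ack]
    if sack_enabled:
        # SACK tells the server which later segments already arrived, so it skips them.
        return [seg for seg in outstanding if seg not in received]
    return outstanding


sent = [1, 2, 3]
received = {1, 3}  # Segment#2 was lost
print(segments_to_retransmit(sent, received, sack_enabled=False))  # [2, 3]
print(segments_to_retransmit(sent, received, sack_enabled=True))   # [2]
```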

The TCP Explicit Congestion Notification (ECN) feature allows an intermediate router to notify end hosts of impending network congestion. It also provides enhanced support for TCP sessions associated with applications, such as Telnet, web browsing, and transfer of audio and video data that are sensitive to delay or packet loss. The benefit of this feature is the reduction of delay and packet loss in data transmissions. Use the “ip tcp ecn” command in global configuration mode to enable TCP ECN.

The TCP time-stamp option provides improved TCP round-trip time measurements. Because the time stamps are always sent and echoed in both directions and the time-stamp value in the header is always changing, TCP header compression will not compress the outgoing packet. Use the “ip tcp timestamp” command to enable the TCP time-stamp option.

The TCP Keepalive Timer feature provides a mechanism to identify dead connections. When a TCP connection on a routing device is idle for too long, the device sends a TCP keepalive packet to the peer with only the Acknowledgment (ACK) flag turned on. If a response packet (a TCP ACK packet) is not received after the device sends a specific number of probes, the connection is considered dead and the device initiating the probes frees resources used by the TCP connection.

Question 4

Explanation

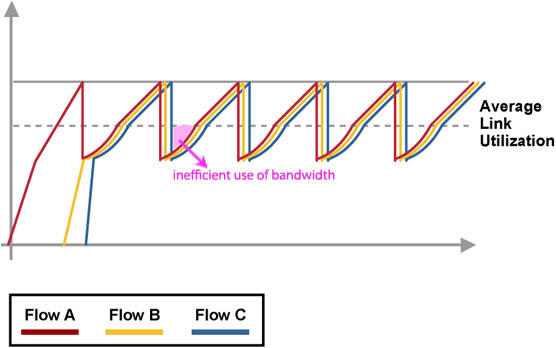

Global synchronization occurs when multiple TCP hosts reduce their transmission rates at the same time in response to congestion. When the congestion eases, the TCP hosts all try to increase their transmission rates again simultaneously (using the slow-start algorithm), which causes congestion again. Global synchronization produces the graph shown above.

Global synchronization reduces the optimal throughput of network applications, and tail drop contributes to this phenomenon. When an interface on a router cannot transmit a packet immediately, the packet is queued. Packets are then taken out of the queue and eventually transmitted on the interface. But if the arrival rate of packets to the output interface exceeds the ability of the router to buffer and forward traffic, the queues grow to their maximum length and the interface becomes congested. Tail drop is the default queuing response to congestion. Tail drop simply means “drop all the traffic that exceeds the queue limit.” Tail drop treats all traffic equally and does not differentiate among classes of service.
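Tail drop itself is simple enough to sketch in code. The class below is only an illustration of the idea, not how a router implements its queues; the name `TailDropQueue` and the limit of 3 packets are assumptions.

```python
from collections import deque


class TailDropQueue:
    """Fixed-size FIFO queue that drops every arrival once it is full."""

    def __init__(self, limit):
        self.limit = limit
        self.packets = deque()
        self.dropped = 0

    def enqueue(self, packet):
        # Tail drop: no look at the class of service, just the queue length.
        if len(self.packets) >= self.limit:
            self.dropped += 1
            return False
        self.packets.append(packet)
        return True

    def dequeue(self):
        # Packets leave in arrival order when the interface can transmit.
        return self.packets.popleft() if self.packets else None


queue = TailDropQueue(limit=3)
results = [queue.enqueue(p) for p in ["voice", "web", "ftp", "voice", "web"]]
print(results)        # [True, True, True, False, False]
print(queue.dropped)  # 2
```

Note that the second voice packet is dropped just like the web packet, because tail drop does not differentiate between them.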

Question 5

Explanation

When TCP is mixed with UDP under congestion, TCP flows lower their transmission rates while UDP flows continue transmitting as usual. As a result, UDP flows dominate the bandwidth of the link; this effect is called TCP starvation/UDP dominance. It can increase latency and lower the overall throughput.

Question 6

Question 7

Question 8

Explanation

If the speed of an interface is equal to or less than 768 kbps (half of a T1 link), it is considered a low-speed interface. Half a T1 offers only enough bandwidth to allow voice packets to enter and leave without delay issues. Therefore, if the speed of the link is lower than 768 kbps, it should not be configured with a queue.

Question 9

Question 10

Explanation

First we need to understand the bandwidth-delay product.

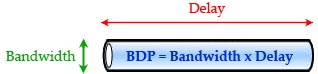

Bandwidth-delay product (BDP) is the maximum amount of data “in transit” at any point in time between two endpoints. In other words, it is the amount of data “in flight” needed to saturate the link. You can think of the link between two devices as a pipe. The cross section of the pipe represents the bandwidth and the length of the pipe represents the delay (the propagation delay due to the length of the pipe).

Therefore the Volume of the pipe = Bandwidth x Delay (or Round-Trip-Time). The volume of the pipe is also the BDP.

For example if the total bandwidth is 64 kbps and the RTT is 3 seconds, the formula to calculate BDP is:

BDP (bits) = total available bandwidth (bits/sec) * round trip time (sec) = 64,000 * 3 = 192,000 bits

-> BDP (bytes) = 192,000 / 8 = 24,000 bytes

Therefore we need 24 KB of data in flight to fill this link.
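The same calculation as a small Python helper, in case you want to try other values. The function name `bdp_bytes` is made up for this example.

```python
def bdp_bytes(bandwidth_bps, rtt_seconds):
    """Bandwidth-delay product in bytes, from bandwidth in bits/sec and RTT in seconds."""
    bdp_bits = bandwidth_bps * rtt_seconds
    return bdp_bits / 8


# The example above: 64 kbps with a 3-second round-trip time.
print(bdp_bytes(64_000, 3))  # 24000.0
```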

For your information, BDP is very important in TCP communication because it helps optimize the use of bandwidth on a link. A disadvantage of TCP is that the sender can transmit only a window's worth of data before it has to wait for an acknowledgment from the receiver. The wait may be very long, so the full bandwidth of the link may go unused.

Based on the BDP, the sending host can increase the amount of data sent on a link (usually by increasing the window size). In other words, the sending host can fill the whole pipe with data so that no bandwidth is wasted.

In conclusion, if we want an optimal end-to-end bandwidth-delay product, TCP must use the window scaling feature so that we can fill the entire “pipe” with data.

Here are the dumps:

https://drive.google.com/drive/folders/0B21TuNHP-x2dc2U5MUlNOXFkd2c?usp=drive_web&ddrp=1

can u guys please send me the latest dumps on miguelfilipe_20_01 @ hotmail . com

Failed today…760 only..labs are same ..loads of new questions..all dumps outdated…study hard chaps

Confirming the 21q dumps are valid.

Smashed my route exam today, 9xx used the dumps from it libraries and tut.

Passed today, used the 539q dumps. you can find them on https://drive.google.com/open?id=0B5mAFqgydmCzQUh0SUxOdE03VGc

Thanks all, done with the router. 539q dumps from IT-Libraries are valid. Practice the labs since the ips change on the exam

I want to know: if I pass CCNP route 300-101, will I get my certificate? Or do I have to pass CCNP switch and troubleshoot as well before I get the CCNP certificate?

Help me guys?

Hey guys, be careful. I know we are here to help one another, but many people are here to abuse others. I am a victim: someone contacted me after I emailed him to ask for a valid dump and said he had everything that could help me prepare for the exam. He asked me to pay $20 on PayPal. I did, and he never sent the file with the latest dump, VCE, and PDF as he had promised.

That is sad. People are not honest and try to make money from the difficulties of others.

Please, if someone tells you to pay him because he has everything (latest dump, VCE and so on) that can help you pass the exam, don’t do it; it is not true. Try to get everything directly on this site, where no money is asked. I am a victim; many people here are not honest, especially those who pretend to have everything and want to share it in private in exchange for money.

Please don’t do that and never do that.

Please understand the concepts even when you are going through the dumps. Otherwise, you are just going to de-value the whole certification. I recall a “CCNP” asking me when I’m delivering a Fortigate firewall – what’s a firewall for, how come it has so many ports, is it a switch…

Please, can anyone who passed recently tell me whether these are valid questions? And what is IT Libraries: does it have the same questions as this site, or must I read both? I really need your advice.

I think Q8 is not explained well. Could anyone add something? And could anyone explain Q2, Q6, and Q7?

For Q2: “ip tcp queuemax To enable window scaling to support Long Fat Networks (LFNs), the TCP window size must be more than 65,535 bytes. The remote side of the link also needs to be configured to support window scaling. If both sides are not configured with window scaling, the default maximum value of 65,535 bytes is applied.”

So, answer is A, B, as mentioned.

@Marcus, for Q8:

https://www.cisco.com/c/en/us/support/docs/voice/voice-quality/12156-voip-ov-fr-qos.html

Basically, good voice quality requires a delay of no more than 150 ms between two audio data packets.

Now consider the serialization delay (the time to place bits on the wire). Placing a 1500-byte packet on the wire of a T1/2 (768 kbps) link takes ~15 ms, which is OK.

But at 56 kbps, it takes 214 ms to place 1500 bytes of data on the wire. So with a sequence like … [audiopacket1]…[audiopacket2]…[otherpacket]…[audiopacket3], there is >214 ms between audiopacket2 and audiopacket3.

To help latency-sensitive data on slow links (typically slower than 768 kbps), fragmentation is used to break packets into smaller pieces.

Without fragmentation, even queueing doesn’t help much, since the queue holds whole packets and doesn’t break them up. With queueing only, the serialization delay is still a problem.

That’s why on links faster than T1/2, fragmentation isn’t usually present nor desired.
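The serialization delay numbers in this comment can be checked with a short calculation. This is just the arithmetic (packet size in bits divided by link speed); the function name `serialization_delay_ms` is made up for the example.

```python
def serialization_delay_ms(packet_bytes, link_bps):
    """Time in milliseconds to place a packet of the given size on the wire."""
    return packet_bytes * 8 / link_bps * 1000


print(round(serialization_delay_ms(1500, 768_000), 1))  # 15.6
print(round(serialization_delay_ms(1500, 56_000), 1))   # 214.3
```

A 1500-byte data packet ahead of a voice packet on a 56 kbps link already exceeds the 150 ms figure mentioned above, while on a 768 kbps link it costs only about 15 ms.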

Question 2

The TCP Window Scaling feature adds support for the Window Scaling option in RFC 1323, TCP Extensions for High Performance. A larger window size is recommended to improve TCP performance in network paths with large bandwidth-delay product characteristics, which are called Long Fat Networks (LFNs). The TCP Window Scaling enhancement provides that support. The window scaling extension in Cisco IOS software expands the definition of the TCP window to 32 bits and then uses a scale factor to carry this 32-bit value in the 16-bit window field of the TCP header. The scale factor can be as large as 14. Typical applications use a scale factor of 3 when deployed in LFNs. The TCP Window Scaling feature complies with RFC 1323. The larger scalable window size allows TCP to perform better over LFNs. Use the ip tcp window-size command in global configuration mode to configure the TCP window size. For this to work, the remote host must also support this feature and its window size must be increased.
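To see how the scale factor relates to the window size, here is a rough Python sketch. It treats the scale factor as a left shift applied to the 16-bit window field, with the maximum of 14 mentioned above. The function name `min_scale_factor` and the 1 Gbps / 100 ms example path are assumptions made for illustration only.

```python
MAX_UNSCALED_WINDOW = 65_535  # largest value that fits in the 16-bit window field
MAX_SCALE_FACTOR = 14         # upper limit on the scale factor


def min_scale_factor(required_window_bytes):
    """Smallest scale factor whose scaled window covers the required size."""
    for shift in range(MAX_SCALE_FACTOR + 1):
        # Each step of the scale factor doubles the window the field can advertise.
        if (MAX_UNSCALED_WINDOW << shift) >= required_window_bytes:
            return shift
    raise ValueError("window too large even with the maximum scale factor")


# A 24,000-byte BDP fits in the plain 16-bit window, so no scaling is needed.
print(min_scale_factor(24_000))      # 0

# Hypothetical LFN: 1 Gbps with 100 ms RTT gives a BDP of 12,500,000 bytes.
print(min_scale_factor(12_500_000))  # 8
```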